Last week I wrote a rant (or cheated at the invitiation according to Brent Ozar) about my most or least favorite datatype. I went on and on about people not using the correct datatypes for the data in their tables.

Now, it’s one thing to write about that, but something else to do something about that. And I’ve tried the latter. Because I want you, dear reader, to have the ability to show your coworker what they can change in the database. Because most of the time, people have no idea what kind of data is living in their tables. Only when problems arise. That’s the moment “we” the DBA’s get called in to fix it. Because there’s a problem with the database and we’ve got some magic moves to fix it.

To demonstrate the usage of the procedures (links at the end of the blog) I’ll create a table with some horrible datatypes, and some good ones too.

CREATE TABLE [dbo].[numbertest](

[cone] [tinyint] NULL,

[ctwo] [smallint] NULL,

[cthree] [int] NULL,

[cfour] [bigint] NULL,

[cfive] [decimal](38, 0) NULL,

[csix] [decimal](18, 7) NULL,

[cseven] [decimal](10, 2) NULL,

[ceight] char(20) NULL,

[cnine] varchar(2000)NULL

) ON [PRIMARY]

GO

Now let’s add some values

insert into numbertest values (1,1,1,1,1,99999999999.0000000,99999999.00, 'A', REPLICATE('ABCD',24))

insert into numbertest values (100,100,100,100,151,150.8800000,99999999.00, 'A', REPLICATE('ABCD',12))

insert into numbertest values (255,255,255,255,111,110.7400000,99999999.00, 'A', REPLICATE('ABCD',18))

insert into numbertest values (255,32767,32767,32767,102,102.1600000,99999999.00, 'A', REPLICATE('ABCD',36))

insert into numbertest values (255,32767,2147483647,2147483647,13,12.9500000,99999999.00, 'A', REPLICATE('ABCD',45))

insert into numbertest values (255,32767,2147483647,9223372036854775807,13,12.9500000,99999999.00, 'A', REPLICATE('ABCD',204))

insert into numbertest values (255,32767,2147483647,9223372036854775807,13,12.9500000,99999999.00, 'A', REPLICATE('ABCD',402))

insert into numbertest values (255,32767,2147483647,9223372036854775807,13,12.9500000,99999999.00, 'A', REPLICATE('ABCD',500))

insert into numbertest values (255,32767,2147483647,9223372036854775807,13,12.9500000,99999999.00, 'A', REPLICATE('ABCD',480))

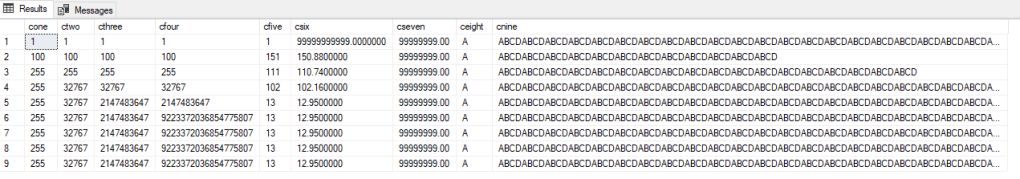

If you select all the data, it looks like this:

Now, let’s see what my procedures thinks of this table. I ran the usp_check_datalength procedure and got the data from the table it’s written the results to.

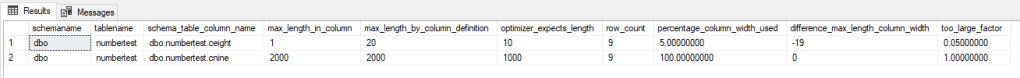

You see the two columns that are containing the char or varchar’s. The lenght by definition, the length the query optimizer expects (I’ve been told the optimizer expects data from the column to be roughly 50% of the definition, so it will reserve memory based on that expectation). The row count will give you an idea of the table size and then the part that’s most interesting. How much of the space inside the column is used. If it’s > 80%, leave it. If it’s under 30%, start a meeting to see if the size can be changed. If there’s a functional reason that the column is this large, fine. If it’s just because it might be usefull in the future, I think you know my opinion.

When you look at the ceight column, it’s defined as char(20) and filled with char(1). 19 places are left unused, usage is 5%. This is a candidate for change. The other one is a varchar(2000) but it’s filled up in at least one record. Good, keep it there or check if it needs to be increased in size. Because when it’s filled to the brim, maybe the datatype isn’t big enough.

Time to look at the numbers! I’ve run the usp_check_integers with the @schema=’dbo’ and @table=’numbertest’ parameters. One advice up front, because this procedure is a nasty bugger, don’t run it with the @schema=’All’ parameter unless you’ve got some time on your hands or it’s a small database.

This procedure will sort the results based on the free range it finds. The top one has a huge free range, and the advice is to check if a tinyint might be a better solution for this column. Because the check is based on the min and max sizes from the datasizes, it might recommend the actual data type.

The max precision column will show you the maximum value from the precision part of decimals. The second row had an decimal(18,7) datatype. But according to the current data, it could work with an 18,2 datatype. The third one could work with an int instead of a decimal because the precision part isn’t used. At least, not in the column part. Maybe it’s used in an ETL/ELT process and the decimal part is key. But that’s up to you to find out.

With these procedures, I hope i can offer you some help in analyzing your data. The code is public onder MIT license, so you can do with it what you want. If you’re using it, I’d like to hear from you. If only to know that someone is using something I’ve created :).

You can download both procedures here.

thanks for reading!