No, it’s not a weird Tavares link, and I wouldn’t go as far as calling Azure sql DB Heaven. But an actual thing i was missing the other day is the Agent that SQL Server offers when you install it on premises.

Problem

What i got into was the following. For a project we’re loading an Azure sql database (serverless) with a lot of data (think billions of rows) that has to come from an on-premises Oracle server. We’re using a vpn connection with network peering to connect to the on-premises server and using a VM with a third-party tool to load the data.

Normally we’re delta-loading the database but because it’s a new project we need to perform an initial load. Nothing really weird, just a huge number of records that needs to pass through. And every now and then the application freezes and refuses to thaw. Because it’s hard to find out when the freezing will start, we want to monitor some processes on the database.

Now on a normal SQL Server i’d create a job in the Agent and be done with that part. But not on Azure. Because the agent doesn’t exist there. In SSMS you’ll see a huge empty space where the agent ought to be.

Google it!

So, i tried to find some references that could help me out. One of the things i found was that a number of links was outdated. I’m not going to call anyone out, Azure is changing fast and keeping up with blogs is hard. Maybe by the time you read this, Azure sql db has an Agent and this is all moot.

Solution 1: Azure Automation

I had been looking into some other automation stuff and thought, well, let’s automate this job.

The process is fairly easy. You create your automation account and then design a job.

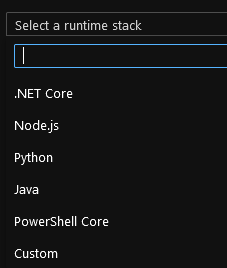

The job is in Powershell which makes it easier to test out the syntax locally.

But i hit one nasty snag. You can schedule every week, every day and every hour. But not every minute, and that was my main target.

Solution 2: Logic App

Logic app’s are a bit different to automation jobs. The follow a structured logic which can be determined in the gui or in a script. I started of with a recurring object that will run every minute. After that, i’ll add an object that starts a sql server stored procedure. But then something weird happens. The sql server object doesn’t recognize the credentials (that i didn’t enter) and can’t connect to my serverless database. It took me the best part of two hours to dig as deep as possible to find out what to do, but no luck.

If anyone can comment how to add or change the sql login to the database, i’d be happy to hear it.

Solution 3: ask Twitter

As many of you (hopefully) know, there are a lot of people active on Twitter sharing knowledge and helping out. I figured it wouldn’t hurt to ask if anyone had any ideas.

Elastic Jobs

First up came Eelco with the suggestion of an Elastic job. These are (now) in preview but it’s worth a shot! It takes a bit of time to get it working though.

First, you need to create an Elastic Job Agent. Where the documentation tells you to create one via the portal, I kept hitting errors. I have no clue why, but it just didn’t work. In the end, i tried an elevated account and with the next line of code, i made it:

New-AzSqlElasticJobAgent -name "ElasticAgent-Prod" -ResourceGroupName "rg-bla" -ServerName "sql-server-prod" -DatabaseName "sqldb-elasticjobs"

After a few minutes I got the confirmation that the agent was working and I could see it from the portal.

Now, to T-SQL to create all sorts of credentials. Well, you need two. One master credential and a job credential.

CREATE DATABASE SCOPED CREDENTIAL elasticJobCred WITH IDENTITY = 'elasticJobCred',

SECRET = 'gobbledygooky';

GO

CREATE DATABASE SCOPED CREDENTIAL MasterCred WITH IDENTITY = 'MasterCred',

SECRET = 'gobbledygooky!';

GO

Now we need to create users that are connected to these credentials AND have sufficient rights to do the stuff they need to do.

On your master database:

create login MasterCred with password = 'gobbledygooky' create login elasticJobCred with password = 'gobbledygooky' create user MasterCred for login ahpMasterCred

Then, on to the database where you’ve got your jobs, but don’t forget the other databases where the job credential should be doing work.

create user elasticJobCred for login elasticJobCred alter role db_owner add member elasticJobCred -- check role suited to needs

Now, we’ve got the basics in place, time for the next step: create a group containing the server where the work should be done

-- Add a target group containing server(s) EXEC jobs.sp_add_target_group 'WorkGroup1' -- Add a server target member EXEC jobs.sp_add_target_group_member 'WorkGroup1', @target_type = 'SqlServer', @refresh_credential_name='MasterCred', --credential required to refresh the databases in a server @server_name='sql-server-prod.database.windows.net'

Cool! We made it. Take some time for coffee before moving on. Because now the hard part starts.

-- create job

EXEC jobs.sp_add_job

@job_name ='WaitStatsJob',

@description='Collection of wait statistics',

@schedule_interval_type = 'Minutes',

@schedule_interval_count = 5,

@enabled = 1,

@schedule_start_time = '2020-09-11 21:15:00'

In my use case, i wanted to start with collecting wait stat. So, i create a job with a fitting name and description. Then the schedule with interval and a start time.

And off it goes, doing absolutely nothing because there are no job steps.

EXEC jobs.sp_add_jobstep @job_name='WaitStatsJob', @step_id = 1, @command=N' get your work done' @credential_name='elasticJobCred', @target_group_name='WorkGroup1', @output_type='SqlDatabase', @output_credential_name='elasticJobCred', @output_server_name='sql-server-prod.database.windows.net', @output_database_name='sqldb-prod', @output_table_name='WaitStats'

And off it goes! The job starts running and you’ll see the results the way you expect them.

If you’ve created jobs in the agent and need to transfer them from on-premises to this agent, script the job on-premises. The syntax is largely the same so you can copy-paste from the create script. Remember there’s no MSDB in Azure (at least, not visible or useable) so make sure you don’t copy those parts.

Function App

The second hint came from Greg Uzelac. He’s using a function app. I went into the process of seeing what a function app can do. There are a few things you can choose from, but i went with Powershell. Just because I know how to write some stuff in .NET or .NET Core doesn’t mean that’s best or safest way to continue.

Again, with powershell I can execute a command if i can reach the database. From that point I can run the command and from the Greg’s tips you can schedule a function app. The schedule you can use is written in NCRONTAB, documentation here.

The reason I haven’t finished this app is because I have to make sure that the app has no connection whatsoever with the outside world. Think sensitive data. And I don’t want any risk whatsoever.

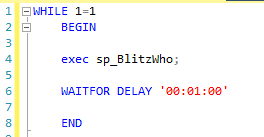

Being creative

I could also get a bit creative. I could start a query in SSMS in a while loop that will only end when SSMS stops.

While this would probably work, I need to have a VM running 24/7 to keep the query window open.

The same principle could be applied to the query editor in the Azure Portal, with the same restriction. The moment the window gets closed (and sessions in your browser will end), then the query will stop.

Even though this might work, in the end the elastic job agent is the best solution for me.

Thanks for reading!