I’m learning ADF. I know the basics of what it does, but I’m in no way really knowing of what to do. In this blog series I’ll try and record the steps I’ve followed to get to grips with basics and maybe more.

Transferring your data from on premises to Azure

I’m assuming you’ve got an Azure subscription, a resourcegroup, a SQL “instance” and a database. If you don’t, it’s quite easy to create those. For my demo’s I’m using the StackOverflow 2013 database, this one is used in more demos. As I’m working in BI, I’ll be changing the data from a normalized to a dimensional model.

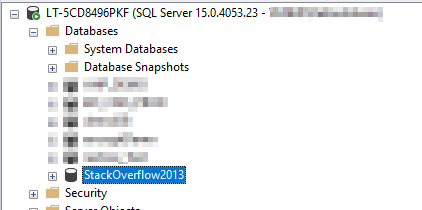

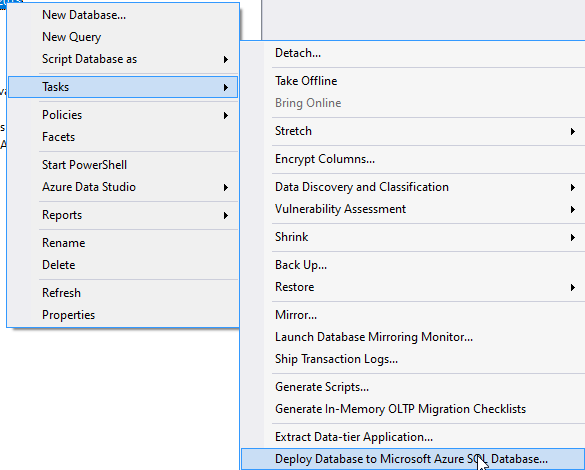

In SSMS, I’m going to select my database and try to upload it to azure. The wizard is somewhat hidden in a submenu of the database.

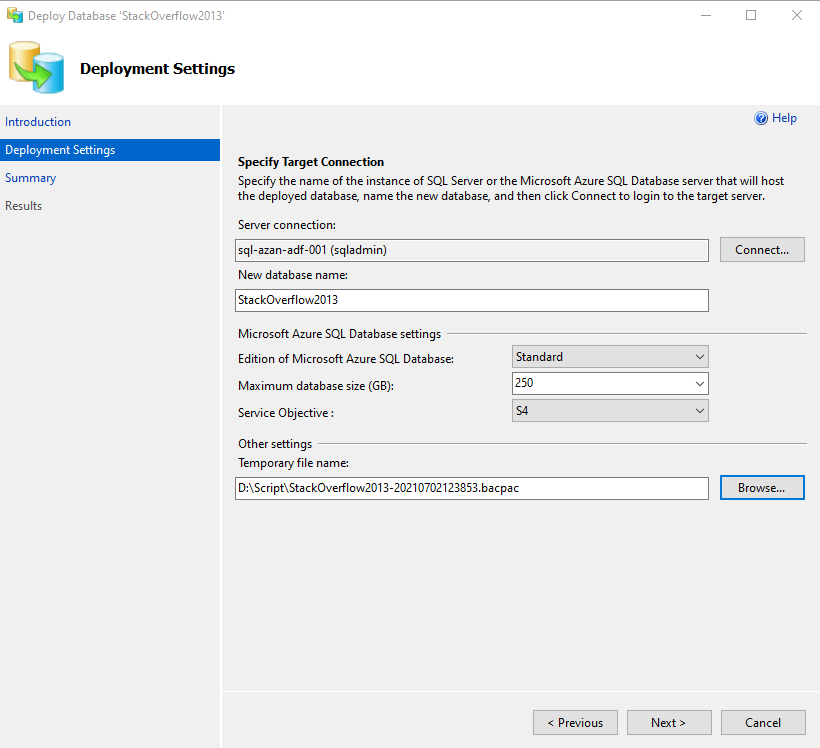

Connect to your Azure database with the correct credentials.

Choose your edition and service objective of the database

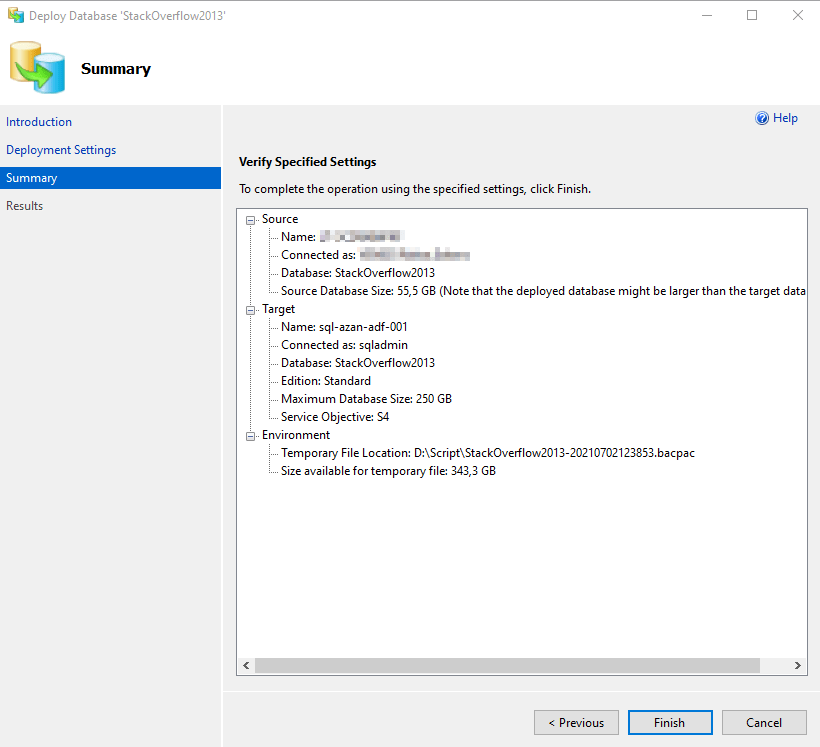

Time to go!

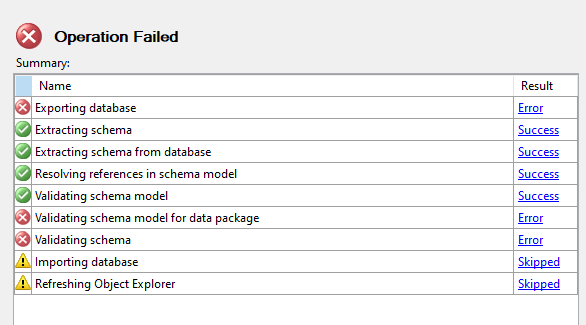

Ah, this was unexpected. Now, I can add a local SQL user, remove my Windows user from the database and go down all sorts of rabbit holes, but I don’t want to. Moreover, I know there’s another solution. But remember that if you run into a problem like this on your real data source, you might want to mitigate this issue, because the alternative is slower.

DMA

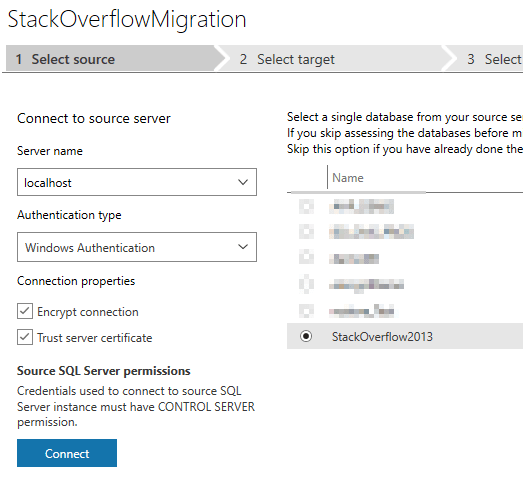

I switched over to the Database Migration Assistant. This will guide you step by step through the process.

After you create a project, select the database you want to move

Second, choose the target:

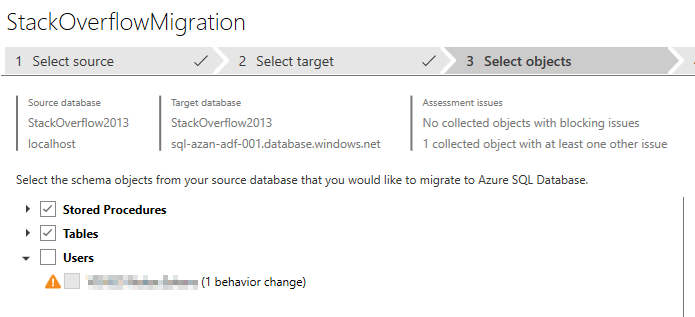

Then select what you need. In my case, I’ve excluded the users because that’s the part that went wrong.

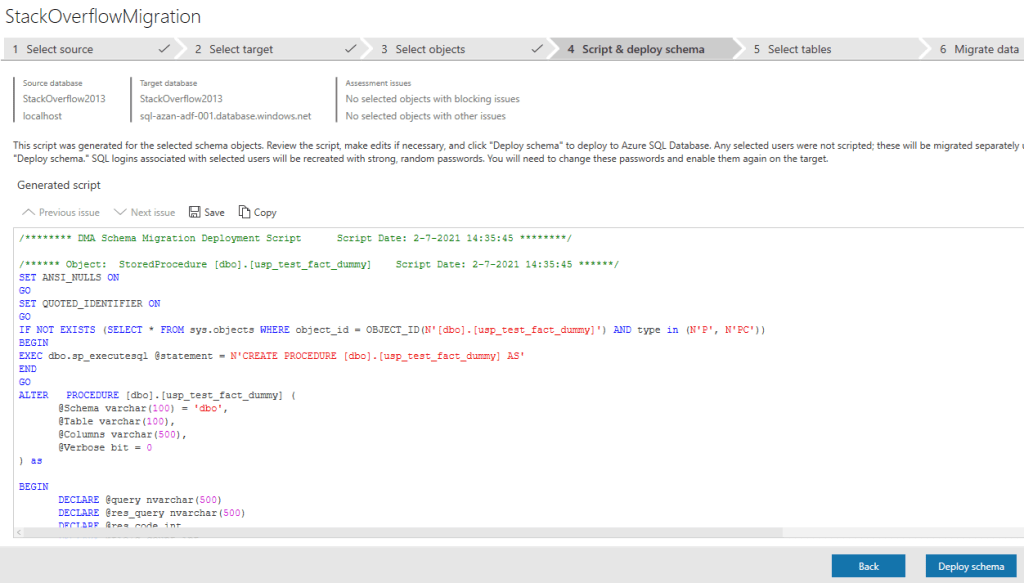

In the next step, you get the create script that creates the schemas, tables etc. You can deploy this script from this wizard. This script should finish quite quickly.

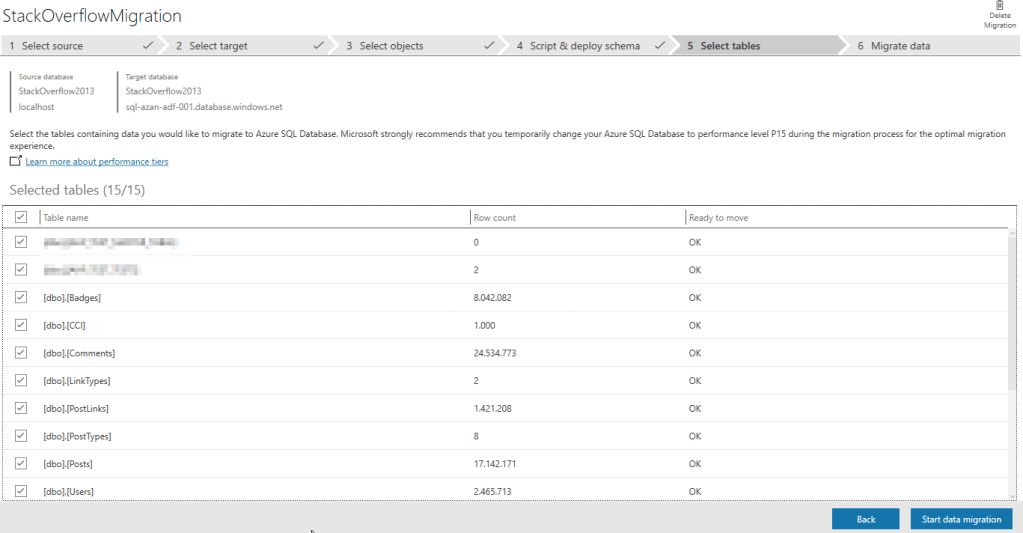

After the schema deployment, time to move the data. You can select all the tables or make some selection if you want. In my case, I’m going for all the tables.

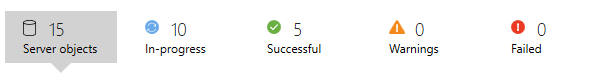

At the top of the application, you can see the overall progress.

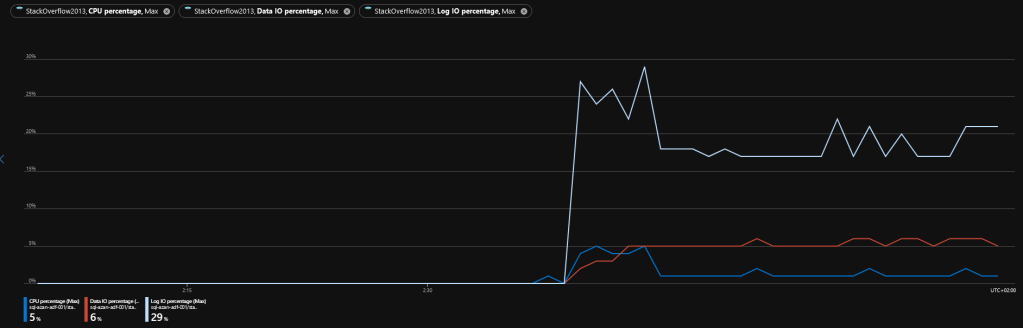

If you take a look on the Azure database, you can see the load on the database. As I’ve seen before, the log percentage is almost always the highest. As there are only inserts, the CPU can kick back and relax and because my upstream isn’t really wonderfull the upload takes some time.

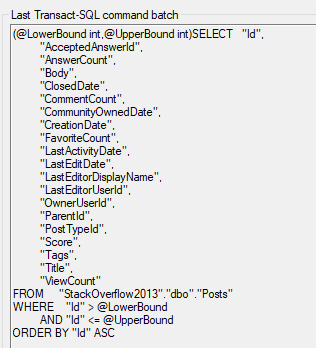

Out of interest, I wanted to see what’s happening locally. Because this isn’t the creation and restore of a bacpac file, but a continuous data stream, there will be some kind of select.

The DMA uses a delta mechanism, selecting n number of rows in a loop and inserting them into the database. Most certainly not the quickest way (a bacpac is just one file you can copy that gets restored on the Azure instance) but I can see it’s merits as well.

Now, it’s just a matter of waiting untill the data is transferred.

In my next blog, I will start with ADF. Hope to see you there!